I think for most people, the term “big data” has little specific meaning; it’s just one of those things, like “machine learning” and “data science,” that mean computers are taking over. A recent paper (Samuel Bray and Bo Wang, “Forecasting unprecedented ecological fluctuations,” PLOS Computational Biology) illustrates the literal meaning. The authors take advantage of recently available “big data” biological datasets (ecological systems sampled at high frequency over long periods of time) to “tackle the grand challenge” of forecasting “rare large-amplitude ‘Black Swan’ fluctuation events” with “significant ecological and economic impact.”

Can study of plankton, forests and marine mollusks help predict financial crises? Traditional biology and economics focused on equilibrium relations, which are usually the only kind that can be studied when you have only a few data points—like quarterly GDP growth or annual population surveys over a few decades. But to quote John Maynard Keynes, “Economists set themselves too easy, too useless a task if in tempestuous seasons they can only tell us that when the storm is long past the ocean is flat again.” The equivalent prediction by a population biologist would be, “The ecosystem may undergo a sudden dramatic change, but when it’s over, I expect surviving and new species populations will stabilize at new levels.”

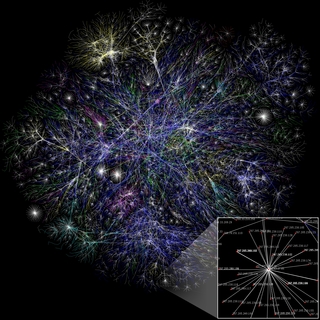

Big-data advocates claim they don’t need deep study of the specifics of economics or biology or any other field they wish to predict, that more and better data are sufficient. There are an infinite number of things that can cause crises, so it’s pointless to try to think of them all. But there seem to be a limited number of mathematical ways crises play out, so you don’t need to know the cause to predict the outcome. Studying the smallest and briefest changes, if you have enough of them measured with enough precision, can predict the evolution of the largest changes.

Nassim Taleb, who coined the modern usage of “Black Swan,” disagrees. He claims long-term outcomes are dominated by rare large events that happen because they are unexpected. Prediction is pointless because, if successful, it makes the event expected, and therefore some other Black Swan happens instead.

For example, before 9/11 we knew there were airplane hijackers, but previous ones had wanted to survive. We knew there were suicide bombers, but previous ones had been low-skill people with simple plans operating close to home. But if someone had anticipated high-skill suicide hijackers with complex plans operating across thousands of miles, we would have taken precautions, and the terrorists would have done something else.

Taleb tells the story of the hypothetical legislator who foresaw the possibility of 9/11 and pushed a law requiring locks on cockpit doors. This prevents 9/11, so of course the locks are deemed to be useless overregulation. Perhaps some bad event occurs because a lock jams, or flights are delayed due to lost keys. Business groups contribute to the legislator’s opponent, leading to her defeat. There could be thousands of people who prevented terrible Black Swans and were rewarded with defeat and obscurity, while the people who overreacted afterward are showered with wealth and power.

We do in fact have a Black Swan forecasting system: Hollywood disaster movies. Like the authors of this paper, they take small-scale events (say, lines for gasoline and electricity blackouts during the 1970s oil crisis) and use them to predict large events, like the Mad Max franchise. A depressing number of security arrangements are designed to prevent wildly implausible disaster-movie scenarios instead of being sensible general precautions that do not rely on specific predictions. An equally depressing amount of financial regulation is designed to prevent the last disaster, like generals who are always prepared to fight the last war, when the next war is more likely to be a reaction to the last war than a repeat of it.

In fairness to the authors, they recommend a more rational and systematic forecasting technique than screenwriters trying to guess what will scare the public most. But the basic question applies. Are big events caused by the same forces that drive day-to-day changes? Or are they reactions to previous and distant big events? Does a sudden dramatic change in an ecosystem result from an unstable system randomly drifting to a crisis point? Or is there some longer-term evolutionary principle at work that builds punctuation into equilibrium via natural selection?

These are big questions, and ancient ones. They are important in almost every field of human study. They have been argued in finance as far back as the early 1960s, when Benoit Mandelbrot, the father of fractal geometry, taught Eugene Fama, the father of efficient markets. As in biology 60 years later, it was the availability of big data sets (tick-by-tick cotton prices for Mandelbrot, and long-term daily stock price histories for Fama) that made the argument possible. Big data has stepped up to the plate to answer these questions, but has yet to take a big swing, much less hit the ball out of the park.
